Definitions

A programming language is a notation for writing programs, which are specifications of a computation or algorithm.[3] Some, but not all, authors restrict the term "programming language" to those languages that can express all possible algorithms.[3][4] Traits often considered important for what constitutes a programming language include:

- Function and target: A computer programming language is a language used to write computer programs, which involve a computer performing some kind of computation[5] or algorithm and possibly controlling external devices such as printers, disk drives, robots,[6] and so on. For example, PostScript programs are frequently created by another program to control a computer printer or display. More generally, a programming language may describe computation on some, possibly abstract, machine. It is generally accepted that a complete specification for a programming language includes a description, possibly idealized, of a machine or processor for that language.[7] In most practical contexts, a programming language involves a computer; consequently, programming languages are usually defined and studied this way.[8] Programming languages differ from natural languages in that natural languages are only used for interaction between people, while programming languages also allow humans to communicate instructions to machines.

- Abstractions: Programming languages usually contain abstractions for defining and manipulating data structures or controlling the flow of execution. The practical necessity that a programming language support adequate abstractions is expressed by the abstraction principle;[9] this principle is sometimes formulated as a recommendation to the programmer to make proper use of such abstractions.[10]

- Expressive power: The theory of computation classifies languages by the computations they are capable of expressing. All Turing complete languages can implement the same set of algorithms. ANSI/ISO SQL-92 and Charity are examples of languages that are not Turing complete, yet are often called programming languages.[11][12]

Markup languages like XML, HTML or troff, which define structured data, are not usually considered programming languages.[13][14][15] Programming languages may, however, share the syntax with markup languages if a computational semantics is defined. XSLT, for example, is a Turing complete XML dialect.[16][17][18] Moreover, LaTeX, which is mostly used for structuring documents, also contains a Turing complete subset.[19][20]

The term computer language is sometimes used interchangeably with programming language.[21] However, the usage of both terms varies among authors, including the exact scope of each. One usage describes programming languages as a subset of computer languages.[22] In this vein, languages used in computing that have a different goal than expressing computer programs are generically designated computer languages. For instance, markup languages are sometimes referred to as computer languages to emphasize that they are not meant to be used for programming.[23]

Another usage regards programming languages as theoretical constructs for programming abstract machines, and computer languages as the subset thereof that runs on physical computers, which have finite hardware resources.[24] John C. Reynolds emphasizes that formal specification languages are just as much programming languages as are the languages intended for execution. He also argues that textual and even graphical input formats that affect the behavior of a computer are programming languages, despite the fact they are commonly not Turing-complete, and remarks that ignorance of programming language concepts is the reason for many flaws in input formats.[25]

History


Early developments

The second autocode was developed for the Mark 1 by R. A. Brooker in 1954 and was called the "Mark 1 Autocode". Brooker also developed an autocode for the Ferranti Mercury in the 1950s in conjunction with the University of Manchester. The version for the EDSAC 2 was devised by D. F. Hartley of the University of Cambridge Mathematical Laboratory in 1961. Known as EDSAC 2 Autocode, it was a straight development from Mercury Autocode adapted for local circumstances, and was noted for its object code optimisation and source-language diagnostics, which were advanced for the time. A contemporary but separate thread of development, Atlas Autocode, was developed for the University of Manchester Atlas 1 machine.

Another early programming language, FLOW-MATIC, was devised by Grace Hopper in the US. It was developed for the UNIVAC I at Remington Rand during the period from 1955 until 1959. Hopper found that business data processing customers were uncomfortable with mathematical notation, and in early 1955, she and her team wrote a specification for an English programming language and implemented a prototype.[34] The FLOW-MATIC compiler became publicly available in early 1958 and was substantially complete in 1959.[35] FLOW-MATIC was a major influence in the design of COBOL, since only it and its direct descendant AIMACO were in actual use at the time.[36]

Refinement

The increased use of high-level languages introduced a requirement for low-level programming languages or system programming languages. These languages, to varying degrees, provide facilities between assembly languages and high-level languages, and can be used to perform tasks which require direct access to hardware facilities but still provide higher-level control structures and error-checking.

The period from the 1960s to the late 1970s brought the development of the major language paradigms now in use:

Each of these languages spawned descendants, and most modern programming languages count at least one of them in their ancestry.

Consolidation and growth

A selection of textbooks that teach programming, in languages both popular and obscure. These are only a few of the thousands of programming languages and dialects that have been designed in history.

The 1980s were years of relative consolidation. C++ combined object-oriented and systems programming. The United States government standardized Ada, a systems programming language derived from Pascal and intended for use by defense contractors. In Japan and elsewhere, vast sums were spent investigating so-called "fifth generation" languages that incorporated logic programming constructs.[41] The functional languages community moved to standardize ML and Lisp. Rather than inventing new paradigms, all of these movements elaborated upon the ideas invented in the previous decades.

One important trend in language design for programming large-scale systems during the 1980s was an increased focus on the use of modules, or large-scale organizational units of code. Modula-2, Ada, and ML all developed notable module systems in the 1980s, which were often wedded to generic programming constructs.[42]

The rapid growth of the Internet in the mid-1990s created opportunities for new languages. Perl, originally a Unix scripting tool first released in 1987, became common in dynamic websites. Java came to be used for server-side programming, and bytecode virtual machines became popular again in commercial settings with their promise of "Write once, run anywhere" (UCSD Pascal had been popular for a time in the early 1980s). These developments were not fundamentally novel; rather, they were refinements of many existing languages and paradigms (although their syntax was often based on the C family of programming languages).

Programming language evolution continues, in both industry and research. Current directions include security and reliability verification, new kinds of modularity (mixins, delegates, aspects), and database integration such as Microsoft's LINQ.

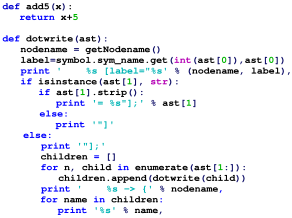

Elements

All programming languages have some primitive building blocks for the description of data and the processes or transformations applied to them (like the addition of two numbers or the selection of an item from a collection). These primitives are defined by syntactic and semantic rules which describe their structure and meaning respectively.

Syntax

A programming language's surface form is known as its syntax. Most programming languages are purely textual; they use sequences of text including words, numbers, and punctuation, much like written natural languages. On the other hand, there are some programming languages which are more graphical in nature, using visual relationships between symbols to specify a program.

The syntax of a language describes the possible combinations of symbols that form a syntactically correct program. The meaning given to a combination of symbols is handled by semantics (either formal or hard-coded in a reference implementation). Since most languages are textual, this article discusses textual syntax. A small grammar for a Lisp-like notation serves as an example:

expression ::= atom | list

atom ::= number | symbol

number ::= [+-]?['0'-'9']+

symbol ::= ['A'-'Z''a'-'z'].*

list ::= '(' expression* ')'

This grammar specifies the following:

- an expression is either an atom or a list;

- an atom is either a number or a symbol;

- a number is an unbroken sequence of one or more decimal digits, optionally preceded by a plus or minus sign;

- a symbol is a letter followed by zero or more of any characters (excluding whitespace); and

- a list is a matched pair of parentheses, with zero or more expressions inside it.

The following are examples of well-formed token sequences in this grammar: 12345, () and (a b c232 (1)).
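As an illustration only, the grammar above can be turned into a small recursive-descent recognizer. The sketch below is written in Python; the function names (`tokenize`, `parse_expression`, `parse`, `is_well_formed`) are hypothetical, and it assumes that parentheses always stand alone as tokens and that all other tokens are separated by whitespace.

```python
import re

# Lexical rules taken from the grammar: number and symbol.
NUMBER = re.compile(r"[+-]?[0-9]+")
SYMBOL = re.compile(r"[A-Za-z]\S*")


def tokenize(text):
    # Assumption: parentheses are always separate tokens; everything
    # else is split on whitespace.
    return text.replace("(", " ( ").replace(")", " ) ").split()


def parse_expression(tokens, pos):
    """Parse one expression at tokens[pos]; return (tree, next position)."""
    if pos >= len(tokens):
        raise SyntaxError("unexpected end of input")
    tok = tokens[pos]
    if tok == "(":
        # list ::= '(' expression* ')'
        items = []
        pos += 1
        while pos < len(tokens) and tokens[pos] != ")":
            item, pos = parse_expression(tokens, pos)
            items.append(item)
        if pos >= len(tokens):
            raise SyntaxError("missing ')'")
        return items, pos + 1
    if NUMBER.fullmatch(tok):
        return int(tok), pos + 1
    if SYMBOL.fullmatch(tok):
        return tok, pos + 1
    raise SyntaxError(f"unexpected token {tok!r}")


def parse(text):
    """Parse exactly one expression; reject trailing input."""
    tokens = tokenize(text)
    tree, pos = parse_expression(tokens, 0)
    if pos != len(tokens):
        raise SyntaxError("trailing input")
    return tree


def is_well_formed(text):
    try:
        parse(text)
        return True
    except SyntaxError:
        return False
```

With these definitions, the three example sequences are accepted, while an unbalanced input such as `(a b` is rejected with a `SyntaxError`.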

Not all syntactically correct programs are semantically correct. Many syntactically correct programs are nonetheless ill-formed, per the language's rules, and may (depending on the language specification and the soundness of the implementation) result in an error on translation or execution. In some cases, such programs may exhibit undefined behavior. Even when a program is well-defined within a language, it may still have a meaning that is not intended by the person who wrote it.

Using natural language as an example, it may not be possible to assign a meaning to a grammatically correct sentence or the sentence may be false:

- "Colorless green ideas sleep furiously." is grammatically well-formed but has no generally accepted meaning.

- "John is a married bachelor." is grammatically well-formed but expresses a meaning that cannot be true.

The following C language fragment is syntactically correct, but performs operations that are not semantically defined (the operation *p >> 4 has no meaning for a value having a complex type and p->im is not defined because the value of p is the null pointer):

complex *p = NULL;

complex abs_p = sqrt(*p >> 4 + p->im);

If the type declaration on the first line were omitted, the program would trigger an error on compilation, as the variable "p" would not be defined. But the program would still be syntactically correct, since type declarations provide only semantic information.

The grammar needed to specify a programming language can be classified by its position in the Chomsky hierarchy. The syntax of most programming languages can be specified using a Type-2 grammar, i.e., they are context-free grammars.[43] Some languages, including Perl and Lisp, contain constructs that allow execution during the parsing phase. Languages that have constructs that allow the programmer to alter the behavior of the parser make syntax analysis an undecidable problem, and generally blur the distinction between parsing and execution.[44] In contrast to Lisp's macro system and Perl's BEGIN blocks, which may contain general computations, C macros are merely string replacements, and do not require code execution.[45]

Semantics

The term semantics refers to the meaning of languages, as opposed to their form (syntax).

Static semantics

The static semantics defines restrictions on the structure of valid texts that are hard or impossible to express in standard syntactic formalisms.[3] For compiled languages, static semantics essentially include those semantic rules that can be checked at compile time. Examples include checking that every identifier is declared before it is used (in languages that require such declarations) or that the labels on the arms of a case statement are distinct.[46] Many important restrictions of this type, like checking that identifiers are used in the appropriate context (e.g. not adding an integer to a function name), or that subroutine calls have the appropriate number and type of arguments, can be enforced by defining them as rules in a logic called a type system. Other forms of static analyses like data flow analysis may also be part of static semantics. Newer programming languages like Java and C# have definite assignment analysis, a form of data flow analysis, as part of their static semantics.
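To make the idea of a static check concrete, the following Python sketch inspects a program's syntax tree without running it and reports names that are read before any assignment. It is a toy under stated assumptions, not how Java or C# implement definite assignment: it handles only straight-line, top-level code (no branches, loops, or functions), and the function name `names_read_before_assignment` is hypothetical.

```python
import ast
import builtins


def names_read_before_assignment(source):
    """Return, sorted, the names read before any assignment.

    Only straight-line top-level statements are handled; this is an
    assumption of the sketch, not a full data flow analysis.
    """
    tree = ast.parse(source)       # fails only on *syntax* errors
    defined = set(dir(builtins))   # built-in names count as defined
    offenders = set()
    for statement in tree.body:
        names = [n for n in ast.walk(statement) if isinstance(n, ast.Name)]
        # Reads are checked before writes: in `x = x + 1` the right-hand
        # x is evaluated before the left-hand x is bound.
        for n in names:
            if isinstance(n.ctx, ast.Load) and n.id not in defined:
                offenders.add(n.id)
        for n in names:
            if isinstance(n.ctx, ast.Store):
                defined.add(n.id)
    return sorted(offenders)
```

The input `b = a + c` parses without complaint, so it is syntactically correct; the check nevertheless flags `a` and `c`, which is the kind of restriction that static semantics adds on top of the grammar.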

Dynamic semantics

Once data has been specified, the machine must be instructed to perform operations on the data. For example, the semantics may define the strategy by which expressions are evaluated to values, or the manner in which control structures conditionally execute statements. The dynamic semantics (also known as execution semantics) of a language defines how and when the various constructs of a language should produce a program behavior. There are many ways of defining execution semantics. Natural language is often used to specify the execution semantics of languages commonly used in practice. A significant amount of academic research went into formal semantics of programming languages, which allow execution semantics to be specified in a formal manner. Results from this field of research have seen limited application to programming language design and implementation outside academia.
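One way to see what an evaluation strategy decides is to observe which argument expressions actually run. The Python sketch below is illustrative only; the helper names (`traced`, `choose_strict`, `choose_deferred`) are hypothetical. Python evaluates function arguments before the call, so deferral is simulated here by passing zero-argument functions.

```python
def traced(value, log):
    """Record that `value` was computed, then return it."""
    log.append(value)
    return value


def choose_strict(condition, if_true, if_false):
    # Both arguments were already evaluated by the caller.
    return if_true if condition else if_false


def choose_deferred(condition, if_true, if_false):
    # Arguments are zero-argument functions; only the selected one runs.
    return if_true() if condition else if_false()


strict_log = []
strict_result = choose_strict(
    True, traced("yes", strict_log), traced("no", strict_log)
)

deferred_log = []
deferred_result = choose_deferred(
    True,
    lambda: traced("yes", deferred_log),
    lambda: traced("no", deferred_log),
)
```

Both calls return the same value, but `strict_log` records two evaluations and `deferred_log` only one. The difference is a matter of dynamic semantics, not of syntax.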

Type system

A type system defines how a programming language classifies values and expressions into types, how it can manipulate those types and how they interact. The goal of a type system is to verify and usually enforce a certain level of correctness in programs written in that language by detecting certain incorrect operations. Any decidable type system involves a trade-off: while it rejects many incorrect programs, it can also prohibit some correct, albeit unusual programs. In order to bypass this downside, a number of languages have type loopholes, usually unchecked casts that may be used by the programmer to explicitly allow a normally disallowed operation between different types. In most typed languages, the type system is used only to type check programs, but a number of languages, usually functional ones, infer types, relieving the programmer from the need to write type annotations. The formal design and study of type systems is known as type theory.

Typed versus untyped languages

A language is typed if the specification of every operation defines types of data to which the operation is applicable, with the implication that it is not applicable to other types.[47] For example, the data represented by "this text between the quotes" is a string, and in many programming languages dividing a number by a string has no meaning and will be rejected. The invalid operation may be detected when the program is compiled ("static" type checking) and will be rejected by the compiler with a compilation error message, or it may be detected when the program is run ("dynamic" type checking), resulting in a run-time exception. Many languages allow a function called an exception handler to be written to handle this exception and, for example, always return "-1" as the result.
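The dynamic case can be sketched in Python, where dividing a number by a string raises a `TypeError` at run time. The function name `safe_divide` is hypothetical, and returning -1 simply mirrors the example in the text rather than recommending it as an error-handling policy.

```python
def safe_divide(numerator, denominator):
    """Divide, returning -1 when the operand types do not support division."""
    try:
        return numerator / denominator
    except TypeError:
        # The type error is detected only when the division is attempted.
        return -1


ok = safe_divide(10, 4)
bad = safe_divide(10, "this text between the quotes")
```

Here `ok` holds the ordinary quotient, while `bad` holds the handler's fallback value.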

A special case of typed languages are the single-type languages. These are often scripting or markup languages, such as REXX or SGML, and have only one data type, most commonly character strings, which are used for both symbolic and numeric data.

In contrast, an untyped language, such as most assembly languages, allows any operation to be performed on any data, which are generally considered to be sequences of bits of various lengths.[47] High-level languages which are untyped include BCPL, Tcl, and some varieties of Forth.

In practice, while few languages are considered typed from the point of view of type theory (verifying or rejecting all operations), most modern languages offer a degree of typing.[47] Many production languages provide means to bypass or subvert the type system, trading type-safety for finer control over the program's execution (see casting).

Static versus dynamic typing

In static typing, all expressions have their types determined prior to when the program is executed, typically at compile-time. For example, 1 and (2+2) are integer expressions; they cannot be passed to a function that expects a string, or stored in a variable that is defined to hold dates.[47]

Statically typed languages can be either manifestly typed or type-inferred. In the first case, the programmer must explicitly write types at certain textual positions (for example, at variable declarations). In the second case, the compiler infers the types of expressions and declarations based on context. Most mainstream statically typed languages, such as C++, C# and Java, are manifestly typed. Complete type inference has traditionally been associated with less mainstream languages, such as Haskell and ML. However, many manifestly typed languages support partial type inference; for example, Java and C# both infer types in certain limited cases.[48] Additionally, some programming languages allow for some types to be automatically converted to other types; for example, an int can be used where the program expects a float.

Dynamic typing, also called latent typing, determines the type-safety of operations at run time; in other words, types are associated with run-time values rather than textual expressions.[47] As with type-inferred languages, dynamically typed languages do not require the programmer to write explicit type annotations on expressions. Among other things, this may permit a single variable to refer to values of different types at different points in the program execution. However, type errors cannot be automatically detected until a piece of code is actually executed, potentially making debugging more difficult. Lisp, Smalltalk, Perl, Python, JavaScript, and Ruby are dynamically typed.
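Both points can be seen in a short Python sketch: one variable is bound to values of different types in turn, and a type error sits unnoticed in a branch until that branch actually runs. The names `describe`, `quiet_result` and `error_seen` are hypothetical and exist only for this illustration.

```python
# One variable, two types at different points in the execution.
value = 42
first_type = type(value).__name__
value = "forty-two"
second_type = type(value).__name__


def describe(n, verbose):
    if verbose:
        # Concatenating str and int is a type error, but it is only
        # discovered if this branch is executed with an int.
        return "value: " + n
    return n + 1


quiet_result = describe(1, verbose=False)   # the faulty branch never runs

try:
    describe(1, verbose=True)
    error_seen = False
except TypeError:
    error_seen = True
```

The call with `verbose=False` succeeds even though the function contains an operation that fails for the same argument on the other path.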

Weak and strong typing

Weak typing allows a value of one type to be treated as another, for example treating a string as a number.[47] This can occasionally be useful, but it can also allow some kinds of program faults to go undetected at compile time and even at run time.

Strong typing prevents the above. An attempt to perform an operation on the wrong type of value raises an error.[47] Strongly typed languages are often termed type-safe or safe.

An alternative definition for "weakly typed" refers to languages, such as Perl and JavaScript, which permit a large number of implicit type conversions. In JavaScript, for example, the expression 2 * x implicitly converts x to a number, and this conversion succeeds even if x is null, undefined, an Array, or a string of letters. Such implicit conversions are often useful, but they can mask programming errors.
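For contrast, a language with fewer implicit conversions makes the programmer write the conversion out, so a bad input surfaces as an error instead of a silent result. The Python sketch below is illustrative; `double` and `try_double` are hypothetical names. Note that `2 * "21"` in Python is string repetition, not a numeric conversion.

```python
def double(x):
    """Multiply by two after an explicit conversion to a number."""
    return 2 * float(x)


def try_double(x):
    """Return the doubled value, or the name of the error that was raised."""
    try:
        return double(x)
    except (TypeError, ValueError) as exc:
        return type(exc).__name__


converted = try_double("21")     # numeric string converts explicitly
letters = try_double("abc")      # not a number: conversion fails
missing = try_double(None)       # wrong type: conversion fails
repeated = 2 * "21"              # sequence repetition, no conversion
```

Each failing case is reported at the point of conversion, which is the trade-off the paragraph above describes: less convenience, but fewer masked errors.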

Strong and static are now generally considered orthogonal concepts, but usage in the literature differs. Some use the term strongly typed to mean strongly, statically typed, or, even more confusingly, to mean simply statically typed. Thus C has been called both strongly typed and weakly, statically typed.[49][50]

It may seem odd to some professional programmers that C could be "weakly, statically typed". However, the generic pointer type, void*, can be converted to and from other object pointer types without an explicit cast. The effect is much like reinterpreting an array of bytes as any other data type without writing a cast such as (int) or (char).

Standard library and run-time system

Most programming languages have an associated core library (sometimes known as the 'standard library', especially if it is included as part of the published language standard), which is conventionally made available by all implementations of the language. Core libraries typically include definitions for commonly used algorithms, data structures, and mechanisms for input and output.

The line between a language and its core library differs from language to language. In some cases, the language designers may treat the library as a separate entity from the language. However, a language's core library is often treated as part of the language by its users, and some language specifications even require that this library be made available in all implementations. Indeed, some languages are designed so that the meanings of certain syntactic constructs cannot even be described without referring to the core library. For example, in Java, a string literal is defined as an instance of the java.lang.String class; similarly, in Smalltalk, an anonymous function expression (a "block") constructs an instance of the library's BlockContext class. Conversely, Scheme contains multiple coherent subsets that suffice to construct the rest of the language as library macros, and so the language designers do not even bother to say which portions of the language must be implemented as language constructs, and which must be implemented as parts of a library.

Design and implementation

Programming languages share properties with natural languages related to their purpose as vehicles for communication, having a syntactic form separate from their semantics, and showing language families of related languages branching one from another.[51][52] But as artificial constructs, they also differ in fundamental ways from languages that have evolved through usage. A significant difference is that a programming language can be fully described and studied in its entirety, since it has a precise and finite definition.[53] By contrast, natural languages have changing meanings given by their users in different communities. While constructed languages are also artificial languages designed from the ground up with a specific purpose, they lack the precise and complete semantic definition that a programming language has.

Many programming languages have been designed from scratch, altered to meet new needs, and combined with other languages. Many have eventually fallen into disuse. Although there have been attempts to design one "universal" programming language that serves all purposes, all of them have failed to be generally accepted as filling this role.[54] The need for diverse programming languages arises from the diversity of contexts in which languages are used:

- Programs range from tiny scripts written by individual hobbyists to huge systems written by hundreds of programmers.

- Programmers range in expertise from novices who need simplicity above all else, to experts who may be comfortable with considerable complexity.

- Programs must balance speed, size, and simplicity on systems ranging from microcontrollers to supercomputers.

- Programs may be written once and not change for generations, or they may undergo continual modification.

- Programmers may simply differ in their tastes: they may be accustomed to discussing problems and expressing them in a particular language.

One common trend in the development of programming languages has been to add more ability to solve problems using a higher level of abstraction. The earliest programming languages were tied very closely to the underlying hardware of the computer. As new programming languages have developed, features have been added that let programmers express ideas that are more remote from simple translation into underlying hardware instructions. Because programmers are less tied to the complexity of the computer, their programs can do more computing with less effort from the programmer. This lets them write more functionality per time unit.[55]

A language's designers and users must construct a number of artifacts that govern and enable the practice of programming. The most important of these artifacts are the language specification and implementation.

Specification

The specification of a programming language is an artifact that the language users and the implementors can use to agree upon whether a piece of source code is a valid program in that language, and if so what its behavior shall be.

A programming language specification can take several forms, including the following:

- An explicit definition of the syntax, static semantics, and execution semantics of the language. While syntax is commonly specified using a formal grammar, semantic definitions may be written in natural language (e.g., as in the C language), or a formal semantics (e.g., as in Standard ML[58] and Scheme[59] specifications).

- A description of the behavior of a translator for the language (e.g., the C++ and Fortran specifications). The syntax and semantics of the language have to be inferred from this description, which may be written in a natural or a formal language.

- A reference or model implementation, sometimes written in the language being specified (e.g., Prolog or ANSI REXX[60]). The syntax and semantics of the language are explicit in the behavior of the reference implementation.

Implementation

An implementation of a programming language provides a way to write programs in that language and execute them on one or more configurations of hardware and software. There are, broadly, two approaches to programming language implementation: compilation and interpretation. It is generally possible to implement a language using either technique.

The output of a compiler may be executed by hardware or a program called an interpreter. In some implementations that make use of the interpreter approach there is no distinct boundary between compiling and interpreting. For instance, some implementations of BASIC compile and then execute the source a line at a time.
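The blurred boundary can be imitated in Python, whose built-in `compile()` translates source text into a code object and whose built-in `exec()` then runs it. The sketch below processes a program one line at a time, loosely in the spirit of the BASIC implementations mentioned above; the three-line program and the variable names are made up for the illustration.

```python
# A hypothetical three-line program, kept as separate source lines.
source_lines = [
    "total = 0",
    "total = total + 5",
    "total = total * 2",
]

namespace = {}
for line in source_lines:
    # Translate one line into a code object (a compilation step) ...
    code = compile(line, "<line>", "exec")
    # ... and run it before the next line is even looked at.
    exec(code, namespace)

result = namespace["total"]
```

Each line is compiled and executed in turn, so compilation and interpretation interleave rather than forming two separate phases.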

Programs that are executed directly on the hardware usually run considerably faster than those that are interpreted in software.

One technique for improving the performance of interpreted programs is just-in-time compilation. Here the virtual machine, just before execution, translates the blocks of bytecode which are going to be used to machine code, for direct execution on the hardware.

Proprietary languages

Although the majority of the most commonly used programming languages have fully open specifications and implementations, many programming languages exist only as proprietary programming languages with the implementation available only from a single vendor, which may claim that such a proprietary language is their intellectual property. Proprietary programming languages are commonly domain specific languages or internal scripting languages for a single product; some proprietary languages are used only internally within a vendor, while others are available to external users.

Many proprietary languages are widely used, in spite of their proprietary nature; examples include MATLAB and VBScript. Some languages may make the transition from closed to open; for example, Erlang was originally Ericsson's internal programming language.

Usage

Thousands of different programming languages have been created, mainly in the computing field.[61] Software is commonly built with 5 programming languages or more.[62]

Programming languages differ from most other forms of human expression in that they require a greater degree of precision and completeness. When using a natural language to communicate with other people, human authors and speakers can be ambiguous and make small errors, and still expect their intent to be understood. However, figuratively speaking, computers "do exactly what they are told to do", and cannot "understand" what code the programmer intended to write. The combination of the language definition, a program, and the program's inputs must fully specify the external behavior that occurs when the program is executed, within the domain of control of that program. On the other hand, ideas about an algorithm can be communicated to humans without the precision required for execution by using pseudocode, which interleaves natural language with code written in a programming language.

A programming language provides a structured mechanism for defining pieces of data, and the operations or transformations that may be carried out automatically on that data. A programmer uses the abstractions present in the language to represent the concepts involved in a computation. These concepts are represented as a collection of the simplest elements available (called primitives).[63] Programming is the process by which programmers combine these primitives to compose new programs, or adapt existing ones to new uses or a changing environment.

Measuring language usage

It is difficult to determine which programming languages are most widely used, and what usage means varies by context. One language may occupy the greater number of programmer hours, a different one may have more lines of code, and a third may consume the most CPU time. Some languages are very popular for particular kinds of applications. For example, COBOL is still strong in the corporate data center, often on large mainframes;[65][66] Fortran in scientific and engineering applications; Ada in aerospace, transportation, military, real-time and embedded applications; and C in embedded applications and operating systems. Other languages are regularly used to write many different kinds of applications.

Various methods of measuring language popularity, each subject to a different bias over what is measured, have been proposed:

- counting the number of job advertisements that mention the language[67]

- the number of books sold that teach or describe the language[68]

- estimates of the number of existing lines of code written in the language – which may underestimate languages not often found in public searches[69]

- counts of language references (i.e., to the name of the language) found using a web search engine.

Taxonomies

There is no overarching classification scheme for programming languages. A given programming language does not usually have a single ancestor language. Languages commonly arise by combining the elements of several predecessor languages with new ideas in circulation at the time. Ideas that originate in one language will diffuse throughout a family of related languages, and then leap suddenly across familial gaps to appear in an entirely different family.

The task is further complicated by the fact that languages can be classified along multiple axes. For example, Java is both an object-oriented language (because it encourages object-oriented organization) and a concurrent language (because it contains built-in constructs for running multiple threads in parallel). Python is an object-oriented scripting language.

In broad strokes, programming languages divide into programming paradigms and a classification by intended domain of use, with general-purpose programming languages distinguished from domain-specific programming languages. Traditionally, programming languages have been regarded as describing computation in terms of imperative sentences, i.e. issuing commands. These are generally called imperative programming languages. A great deal of research in programming languages has been aimed at blurring the distinction between a program as a set of instructions and a program as an assertion about the desired answer, which is the main feature of declarative programming.[71] More refined paradigms include procedural programming, object-oriented programming, functional programming, and logic programming; some languages are hybrids of paradigms or multi-paradigmatic. An assembly language is not so much a paradigm as a direct model of an underlying machine architecture. By purpose, programming languages might be considered general purpose, system programming languages, scripting languages, domain-specific languages, or concurrent/distributed languages (or a combination of these).[72] Some general purpose languages were designed largely with educational goals.[73]